Interobserver variability impairs radiologic grading of primary graft dysfunction after lung transplantation - ScienceDirect

Interobserver and Intraobserver Variability of Interpretation of CT-angiography in Patients with a Suspected Abdominal Aortic Aneurysm Rupture - ScienceDirect

Interobserver variability for the first evaluation of the two halves of...

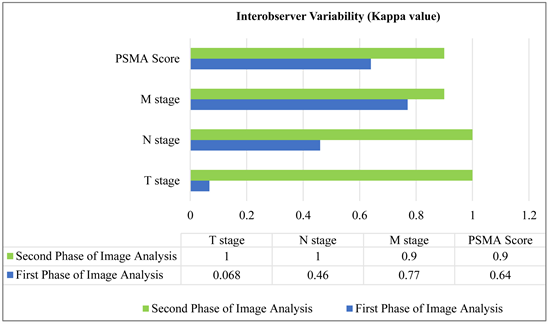

Inter-Observer Variability in the Interpretation of ⁶⁸Ga-PSMA PET-CT Scan according to PROMISE Criteria

Inter-observer agreement and reliability assessment for observational studies of clinical work - ScienceDirect

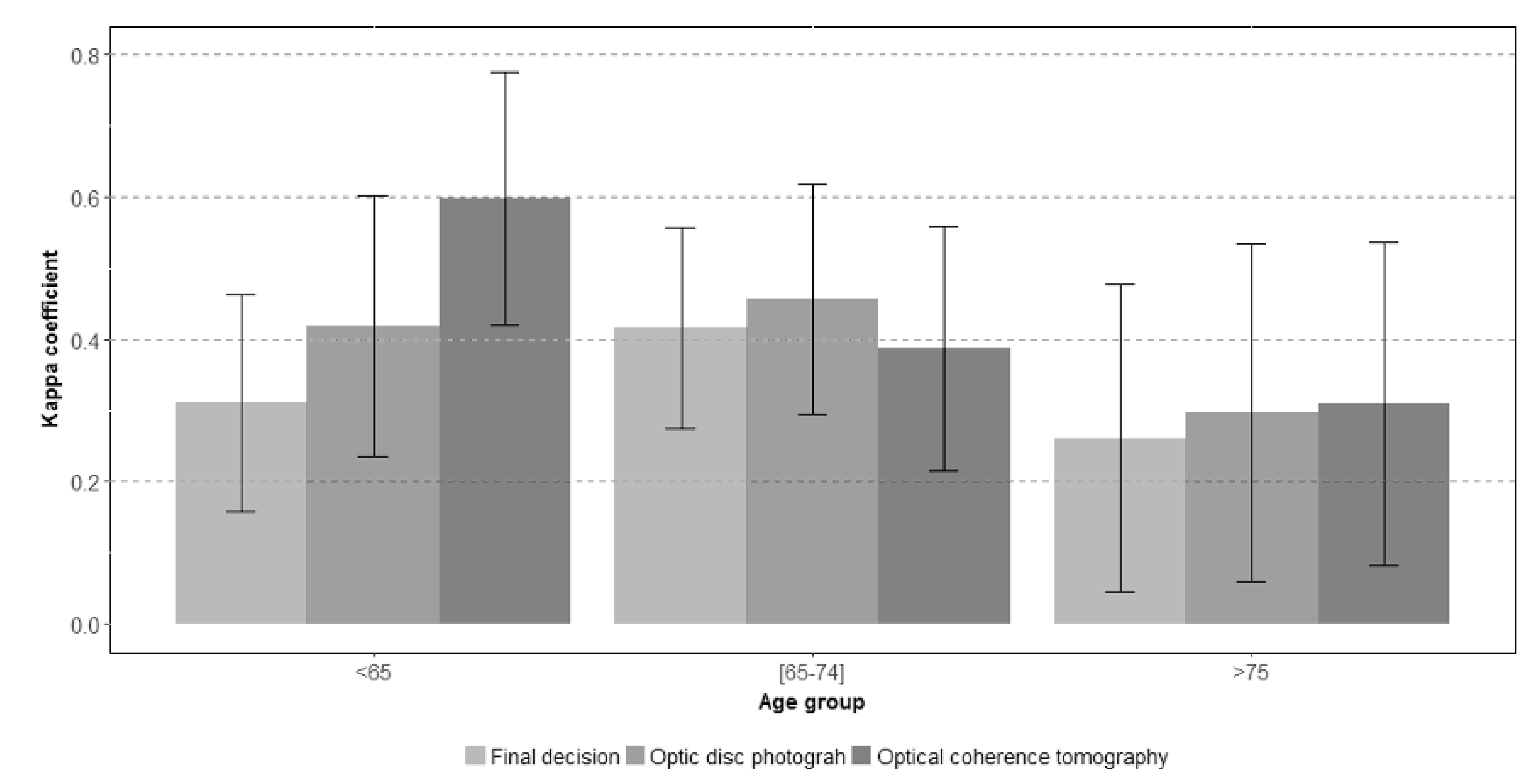

JCM | Interobserver and Intertest Agreement in Telemedicine Glaucoma Screening with Optic Disk Photos and Optical Coherence Tomography

Interobserver variability in the interpretation of computed tomography following aneurysmal subarachnoid hemorrhage | Journal of Neurosurgery, Volume 115, Issue 6 (2011)

Inter-observer variability (kappa) for the different Clinical Pulmonary...

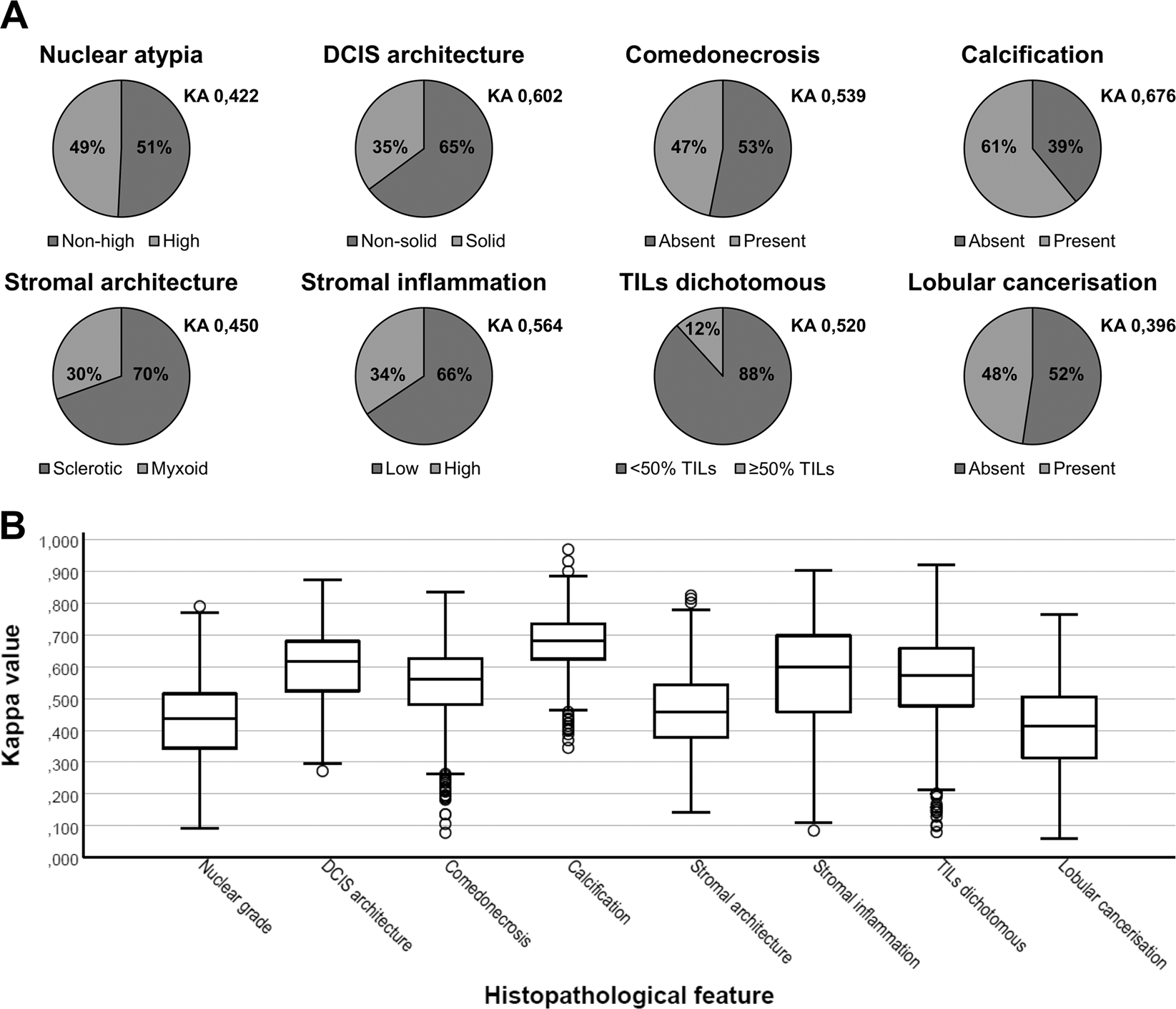

Interobserver variability in upfront dichotomous histopathological assessment of ductal carcinoma in situ of the breast: the DCISion study | Modern Pathology

Accuracy of the Interpretation of Chest Radiographs for the Diagnosis of Paediatric Pneumonia | PLOS ONE

Intra- and Interobserver Reliability and Agreement of Semiquantitative Vertebral Fracture Assessment on Chest Computed Tomography | PLOS ONE

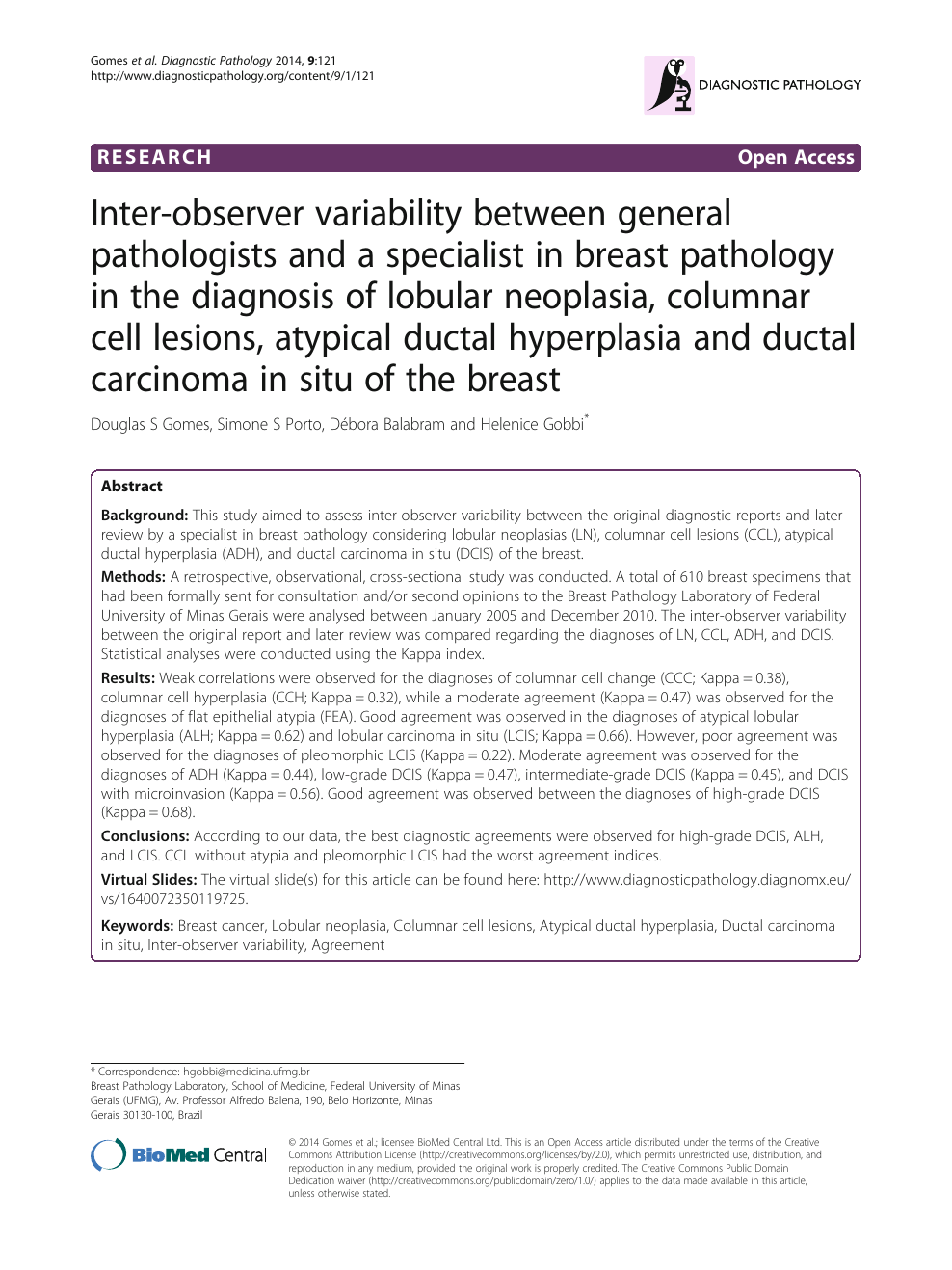

Inter-observer variability between general pathologists and a specialist in breast pathology in the diagnosis of lobular neoplasia, columnar cell lesions, atypical ductal hyperplasia and ductal carcinoma in situ of the breast

Inter-observer variation can be measured in any situation in which two or more independent observers are evaluating the same thing. Kappa is intended to.

![Understanding interobserver agreement: the kappa statistic (Table 2) | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table2-1.png)

![Understanding interobserver agreement: the kappa statistic (Table 1) | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/2-Table1-1.png)
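Several of the entries above report agreement as a kappa statistic. The sketch below is a minimal illustration of Cohen's kappa for two raters assigning nominal categories to the same items, κ = (p_o − p_e) / (1 − p_e), where p_o is observed agreement and p_e is the agreement expected by chance from each rater's category frequencies. The function name `cohens_kappa` and the sample ratings are hypothetical; this is not the method used by any particular study listed here.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items with nominal categories.

    Hypothetical helper for illustration; assumes unweighted categories.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: fraction of items both raters labeled identically.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal frequency per category.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1:
        # Both raters used one and the same category throughout; kappa is undefined.
        raise ValueError("kappa is undefined when expected agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


if __name__ == "__main__":
    # Hypothetical ratings, not data from any study listed above.
    reader_1 = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "no"]
    reader_2 = ["yes", "no", "no", "no", "yes", "no", "yes", "yes", "yes", "no"]
    # 8/10 observed agreement, 0.5 chance agreement -> kappa of 0.6
    print(round(cohens_kappa(reader_1, reader_2), 3))  # 0.6
```

This unweighted form treats every disagreement equally; ordinal gradings are often summarized with a weighted kappa instead, and studies with more than two raters commonly use Fleiss' kappa.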